February 9, 2024

Cloud

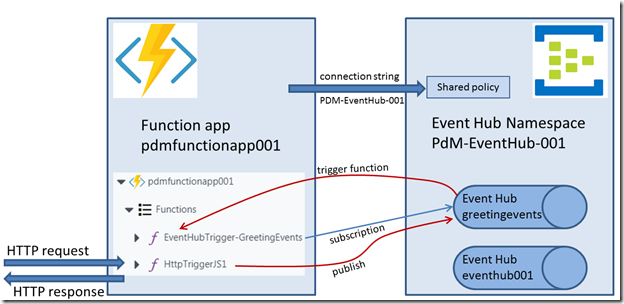

As reported by Microsoft: “Starting at 01:57 UTC on [Sunday] 21 January 2024, customers using Azure Resource Manager might experience errors when trying to access the Azure Portal, Azure Key Vault and other Microsoft services.”

My initial reaction was: well, that is unfortunate. But for us, ARM mainly plays a role when provisioning resources or changing configuration, so we did not expect any immediate impact on running applications. Azure’s status page, which indicates service health, did not report issues with any services besides the ones mentioned here.

That, however, was misleading. We were lulled into a false sense of security. As Microsoft later put it: “This also impacted downstream Azure services, which depend upon ARM for their internal resource management operations.” It turns out that many Azure services rely on ARM internally in order to operate. We felt the effects in, for example, Event Grid, Storage and Functions, as well as in widespread connectivity issues. The problems were experienced globally: zone redundancy, regional failover or any other fallback scenario within Azure did not shield us from the impact.

This issue lasted until 08:58 UTC (at least for the West Europe region, which was the last to recover). Seven hours of loss of service. Not all services, but quite a few. Impact for dozens of our customers. Long shifts during the night and early Sunday morning hours. And not a lot to go on: no meaningful responses to support tickets, unclear communication and no indication of the (underlying) issue or the resolution. And still a misleading message regarding the impact. Based on the information we had, we could do little more than inspect and activate circuit breakers and pass on to our customers what little we knew. Only for those systems where we have hybrid cloud solutions and a private cloud/on-prem failover facility could we trigger manual failover, or monitor automated failover, and continue at (partial) service levels.
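
For readers less familiar with the term, the idea behind a circuit breaker can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation we run in production: the class name, the thresholds and the injectable `clock` are all hypothetical choices made for the example.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    """Stops calling a failing dependency for a while instead of hammering it."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_timeout = reset_timeout          # seconds to wait before a trial call
        self.clock = clock                          # injectable so the breaker can be tested
        self.failures = 0
        self.opened_at = None                       # None means the breaker is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: fail fast without touching the dependency.
                raise CircuitOpenError("dependency marked unavailable")
            # Timeout elapsed: half-open, let a single trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call, or too many failures in a row, (re)opens the breaker.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the breaker and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A caller would wrap each call to the unreliable dependency in `breaker.call(...)` and treat `CircuitOpenError` as the signal to fall back, for instance to a cached response or a secondary environment.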

What happened? This article provides a nice overview of the events as reported afterwards by Microsoft. A short summary:

- an internal maintenance process on January 21st 2024 made a configuration change to an internal tenant which was enrolled in a preview (that had started in 2020).

- this preview contained a bug (“unbeknownst to us”)

- the bug was activated by the configuration change, causing an ARM node to fail repeatedly upon startup. (01:57 UTC)

- all ARM nodes are scheduled to reset/startup periodically (to protect against accidental resource exhaustion such as memory leaks)

- apparently (and here Microsoft’s report is unclear) the bug spread to ARM nodes across the globe, causing a gradual loss in capacity to serve requests. Over time, this impact spread to additional regions, predominantly affecting East US, South Central US, Central US, West Central US, and West Europe. Eventually this loss of capacity overwhelmed the remaining ARM nodes, which created a negative feedback loop and led to a rapid drop in availability.

- at 04:25 UTC, the cause of the problem was understood, and at 04:51 UTC the rollout of a configuration change to disable the preview feature was started. By 05:30 UTC, all regions except West Europe had recovered. At 08:58 UTC, all ARM nodes were up and running again in West Europe as well (the region where most of our services run). Note: according to Microsoft, the recovery of West Europe was slowed by a retry storm from failed calls, which intensified traffic in that region. Microsoft increased throttling of certain requests in West Europe, which eventually enabled its recovery by 08:58 UTC.
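
The retry storm in the last point is something clients can help to dampen. A common technique is exponential backoff with jitter: wait longer after each failed attempt and randomize the wait so that many clients do not retry in lockstep. The sketch below is a generic illustration of that technique, not what Microsoft or any Azure SDK does internally; the function name, the defaults and the injectable `sleep` and `rng` parameters are assumptions made for the example.

```python
import random
import time


def call_with_backoff(func, max_attempts=5, base_delay=0.5, max_delay=30.0,
                      sleep=time.sleep, rng=random.random):
    """Call func, retrying failures with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return func()
        except Exception:
            if attempt == max_attempts - 1:
                # Out of attempts: give up and surface the last error.
                raise
            # The upper bound doubles with every attempt, capped at max_delay.
            cap = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: sleep a random fraction of the cap to spread clients out.
            sleep(rng() * cap)
```

Capping the number of attempts matters as much as the delay: a client that retries forever keeps adding load to a service that is already struggling.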

What stands out here is, first of all, a bug in production code. That can happen to anyone, including the people running Azure. It is a little disconcerting but should not be wholly unexpected. More worrying, I think, is the fact that this configuration change, applied by an internal maintenance process, apparently had not been tested (on an ARM node that was part of the 2020 preview) and could still be rolled out in production. Why was there no check? And then even worse: for some (so far unexplained) reason, its effects could spread around the world, and kept doing so for quite some time after the first deterioration of service levels had been detected. Given the scale of the issue, I am somewhat impressed with the way it was resolved: these must have been some very tense moments in some Microsoft office somewhere, and under enormous pressure staff were able to find the cause, create a fix and roll it out successfully. I appreciate their efforts and the stress they must have experienced!

This image from The Web Performance Optimization & Security Blog – generated by Dall-E – illustrates the frantic search for the cause and the solution that must have occurred within the Azure organization on January 21st.

The cloud is someone else’s computer. The mistakes you know you could make in your own IT environment can also be made by the cloud provider. People are responsible for the Azure systems. People who are experienced and smart and dedicated. And who make mistakes and do not have full insight into how systems behave, how changes can have widespread impact and what dangers may lurk in years-old software. It is understandable that this can and will happen. And we should realize that it will happen again. Therefore we have to prepare for it. Outages like this one will occur from time to time. We need to design our strategy and our cloud environment based on that assumption.